In the last post I argued that coordination is commitment—a vow to avoid futures with undesirable outcomes.

I also raised a question: when coordination is required, how much do you need?

Let's start counting.

My interest in this topic started in distributed systems and databases, but in this post I want to look at commitments through a lens that everybody can understand: games.

In competitive games there's a well-known strategy called playing for complications: deliberately steering the game so your opponent faces more hard choices. It turns out this idea has a precise connection to commitment counts. But as we'll see, the lesson for systems isn't about your opponent's complications. It's about your own.

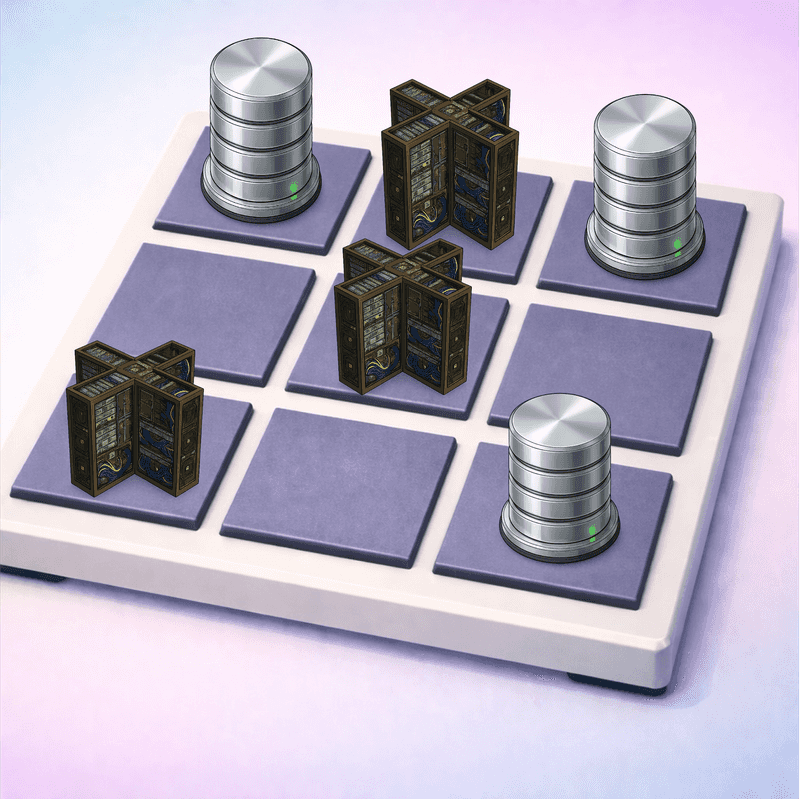

Tic-Tac-Toe as a Commitment Problem

Consider tic-tac-toe from 𝗫's perspective. The board starts empty. The game's specification — the set of admissible outcomes — is the set of all reachable final board configurations from that point in history. 𝗫's job is to extend history to narrow that set, move by move, until only winning configurations remain — or at least, no losing ones.

Each move 𝗫 makes is a commitment in history. Placing an 𝗫 in the center square permanently eliminates every future board configuration in which the center is empty or occupied by 𝗢. That's irrevocable. You can't un-play a move.

𝗢's moves are environment events in history: they further narrow the set of reachable configurations, but 𝗫 doesn't control them. 𝗫 commits; the environment responds; 𝗫 commits again.

The game is a sequence of alternating commitment and environment events. And the commitments are sequential: each move depends on what the board looks like after the previous moves (both 𝗫's and 𝗢's). You can't play your third move before you've seen the response to your second. The sequential dependencies are forced by the structure of the game.

So tic-tac-toe has a move depth of (at most) 5 — that's the maximum number of 𝗫 moves in a game. But not all of these moves are genuine commitments. As we'll see, some are forced — and a forced move doesn't narrow the admissible outcomes at all. The commitment depth may be lower.

Not All Moves Are Created Equal

Let's dig in, because this is where it gets interesting. Not every 𝗫 move is equally "committal."

In some board positions, 𝗫 has only one good move; the rest all lead to losses. That's not really a choice. 𝗫 is forced. It's not formally a commitment: there's only one admissible option, so no outcomes are being excluded, no futures are being ruled out. If the game were on a computer, it would "auto-move" for you at that stage, since no decision is available.

We noted last time that you don't need a wedding when there's only one eligible match, and you don't need a commitment when there's only one viable move.

In other positions, 𝗫 has multiple moves that are all equally optimal: they all guarantee at least a draw against perfect play. That's where the real commitment happens. 𝗫 must choose among several futures that are all fine, and the choice permanently excludes the others. The commitment burns futures that were genuinely viable.

The interesting depth of a game isn't the total number of moves. It's the number of moves where the player actually has to make a non-trivial choice — where multiple options are equally good and the player must commit to one.

The Fallible Opponent

Against a perfect opponent, it doesn't matter which of the equally-good moves you pick. They all lead to the same outcome (a draw, in tic-tac-toe). So the "real" commitment depth — the number of non-trivial choices — is irrelevant against perfection.

But opponents aren't perfect. This is where commitment depth starts to get really interesting, and where we arrive at the idea of playing for complications.

If your opponent makes mistakes with some probability, then you should keep them playing! Prefer lines of play that force them to make more non-trivial choices. Each choice they face is an opportunity to blunder. The more genuine commitments your opponent has to make, the more chances they have to pick wrong. This is playing for complications: steering the game toward positions rich in genuine commitments (i.e. opportunities for error) for your opponent.

Think about it from 𝗫's perspective. In tic-tac-toe, every opening move is game-theoretically optimal — they all guarantee a draw against a perfect opponent. But the commitment structures they produce for 𝗢 are very different. A corner opening leaves 𝗢 with an average of 1.8 genuine commitments across the game. An edge opening pushes that to 3.2 — nearly every one of 𝗢's four moves is a real choice.

Against a perfect 𝗢, it doesn't matter. Against a fallible 𝗢, the edge opening is better — assuming 𝗫 plays perfectly. It creates more opportunities for 𝗢 to mess up. Each non-trivial choice 𝗢 faces is a coin flip that might land in your favor.

But what if 𝗫 is also fallible? Then maximizing 𝗢's commitments isn't enough — you also have to worry about 𝗫's own commitment depth. An opening that forces 𝗢 into 4 commitments but also puts 𝗫 through 4 is no better than one with 2 each. What matters is the ratio: 𝗢's commitments relative to 𝗫's.

Against a fallible opponent, prefer strategies that maximize the ratio of your opponent's genuine commitments to your own. Each commitment is a chance to blunder, and you want that chance landing on the other side of the board.

Aside: the tic-tac-toe receipts

This is worth pausing on, because it reveals a tension.

In game theory, the edge opening for tic-tac-toe is conventionally considered the "weakest" — and in a sense it is, because it gives 𝗢 the most room to maneuver. But that room is exactly what creates commitment depth. 𝗢 has more equally-good options, which means more genuine decisions, which means more chances to pick wrong.

The corner opening is "strongest" in the sense that it constrains 𝗢 the most — but that constraint means 𝗢's moves are mostly forced, and forced moves aren't commitments. 𝗢 can play on autopilot.

Here are the numbers, computed by exhaustive minimax over the full game tree (source):

| 𝗫 opening | 𝗢 commits (avg) | 𝗫 commits (avg) | 𝗢/𝗫 ratio |

|---|---|---|---|

| Corner | 1.8 | 2.1 | 0.84 |

| Center | 1.9 | 2.5 | 0.76 |

| Edge | 3.2 | 2.7 | 1.17 |

(𝗫's commitment count excludes the opening commitment (move) — that's the independent variable we're conditioning on, not a future decision to be counted.)

The ratio is the key column. Corner and center both have ratios below 1: 𝗫 commits more than 𝗢, which means 𝗫's own blunders outweigh 𝗢's. Only the edge opening pushes the ratio above 1 — 𝗢 commits more than 𝗫, so errors net out in 𝗫's favor.

A simulation with equal error rates for both players confirms this. At each genuine commitment, a player blunders with probability ε (choosing uniformly at random among all legal moves). At forced moves, both play correctly:

| Opening | ε = 0.05 | ε = 0.10 | ε = 0.20 | ε = 0.50 |

|---|---|---|---|---|

| Corner | +0.008 | +0.016 | +0.031 | +0.074 |

| Center | +0.027 | +0.050 | +0.095 | +0.184 |

| Edge | +0.028 | +0.054 | +0.096 | +0.166 |

(Values are 𝗫's net advantage: 𝗫 win rate minus 𝗢 win rate. All positive because 𝗫 moves first.)

Center and edge produce similar net advantages — both much better than corner. This tracks with the ratio: corner's ratio (0.84) is closest to 1 but still below it, and the simulation confirms it's the worst opening against a fallible opponent. Edge has the best ratio (1.17) and performs well in simulation, though center is comparable — its lower total commitment count means fewer blunder opportunities for either side, which partially compensates for its worse ratio.

So "optimal strategy" and "playing for complications" pull in opposite directions, and the right balance depends on how fallible the players are. Neither axis alone gives the whole picture.

A caveat: in tic-tac-toe, "error" is binary — a move is either optimal or it isn't, and a suboptimal move against a perfect opponent always costs the game. In a richer game (chess, Go, or a distributed system), errors come in degrees. A move might not lose outright but might shrink your margin, or increase the number of commitments you need later. The right measure of "error" in those settings isn't binary but something like expected value lost per commitment — what chess engines approximate as centipawn loss. The commitment-depth framework still applies, but the analysis of fallibility gets more nuanced.

This is a different axis than the one game theory usually emphasizes. Classical game theory cares about the value of a position — can you win, lose, or draw? Commitment depth cares about the structure of the path to that value — how many real choices does each player face along the way? Two positions might have the same game-theoretic value but very different commitment structures, and against imperfect opponents, that difference matters.

From Games to Systems

This isn't all just fun and games. The same structure shows up in computer systems, which is where I started this series. But the emphasis shifts.

Playing for complications is about the opponent's side of the board — maximizing their genuine commitments. That's a fine strategy in a game. But in a distributed system, the system is the player, and the environment (requests, delays, failures) is the opponent. The environment's moves are difficult to control. What you can influence is your own commitment depth. And commitments are expensive. A commitment in a distributed system is a coordination step: a consensus round, a serialization decision, an irrevocable ordering between tasks. Each one costs latency, and if it turns out wrong — if a task aborts — you pay again retrying it.

So the lesson from the ratio analysis carries over directly: what matters for systems is minimizing your own commitment depth. The environment will do what it does. Your job is to keep your side of the board simple.

The main lever is scheduling. When the system has a choice about which tasks to run, it should favor tasks that don't conflict with each other. Conflicting tasks force sequential commitments — you have to order them, and ordering is coordination. Non-conflicting tasks can commit independently, in parallel, with no coordination between them. The commitment depth drops.

This is why the monotone case from earlier posts is so appealing: if all operations commute, nothing conflicts, and the commitment depth is zero. No coordination needed, ever. That's the ideal. Most real systems can't achieve it everywhere, but they can push toward it — by designing schemas, APIs, and task structures that minimize conflicts, and by scheduling to keep conflicting tasks apart. There's good systems work to be done there.

Counting Commitments

What I find theoretically appealing about this view is that it gives us something to count.

Classical complexity theory counts computational steps — how long does it take to produce an answer? But many problems aren't about computing an answer. They're about choosing one admissible outcome from many. The cost isn't computation; it's commitment.

Games make this vivid. The computational cost of tic-tac-toe is trivial — any move can be evaluated in constant time. But the commitment structure isn't trivial at all. The number of genuine sequential choices, the dependency between them, the way one player's choices shape the other's — that's a real structure with real consequences, and it's invisible to classical complexity measures.

The same is true in databases and distributed systems. The computational cost of textbook protocols is typically minimal. The commitment cost — how many irrevocable choices must be sequenced — is what determines the inherent latency of the spec. And that cost is a property of the specification, not the implementation.

Are there specifications that require three sequential commitments? Ten? A hundred? Is there a meaningful hierarchy, and can we characterize it?

I think so. But that's for another post.